I started learning the abacus. Now I have questions about AI

On struggle, shortcuts, and what you're actually saving time for

CAREER & PRODUCTIVITY

3/18/2026 · 8 min read

My mother-in-law knows I’m a nerd.

She once gave me an abacus as a gift. Most people would probably be confused, even if they were too gracious to show it.

I straddle both ends of the spectrum depending on the day, but I notice both extremes are uncomfortable places to sit. It's tiring being on edge about losing myself and my job all the time, and frustrating when I'm not ascending to deityhood like folks are promising.

I do want to point out one gap in both arguments, though. Interestingly, it’s the same gap:

What are people supposed to do with their lives?

The AI debate is a process and tools question. I'm asking a "why" question: What should people strive to fill their lives with, from daily activities to long-term accomplishments?

I have the same question, albeit in different flavors, for both camps:

To the pro-AI camp: Ok, now what?

Once you automate all the things you can, what then? What does your life look like? AI agents are checking and answering all your emails, your secret AI persona bot is secretly replacing you in all work meetings, and you've got agents spraying AI slop in your tone of voice across all your socials on a regular (but seemingly organic) cadence. What do you now do with all your free time? And if it matters, what does your ideal world look like?

It truly reminds me of a certain type of penny pincher. The ones who try to find any excuse to save a little money here and there, but their hoard of wealth often isn't earmarked for something special; they've gotten so addicted to the habit of saving money that they forgot money is supposed to be spent on something.

Now just replace money with time and that's how I see the AI pattern unfolding.

Saving money and using AI are not bad things. But hyperfocusing on saving risks losing sight of the "for what?"

But that brings me to today. I had some unexpected extra time and came across the abacus again. Some quick “how-to” searches and a bit of fiddling, and I’m re-experiencing a familiar, but almost foreign joy of learning something completely new for the first time again. I’m literally re-learning how to add, subtract, and multiply in my late 30s.

Beginner’s mind is underrated

As I’m enjoying being in the fun/frustrating zone of being intentionally dumb again, I realized there were a few things I had been missing.

My life is probably like yours: full of a constant barrage of information that I have to do my best to filter something useful out of in order to be a somewhat productive person.

When I have time.

I rarely allow myself to enter the realm of the complete novice. Learning something almost entirely outside of my current experience, struggling through the basics and giving myself the opportunity to fail repeatedly before making some progress, and basking in the unique glow of that post-struggle eureka moment. All for something that I didn't have to do, but wanted to.

Of course, my recent explorations in AI tools made the lack of these feelings more obvious, but I'm not going to blame AI for their absence entirely. I'd unintentionally settled into maintenance mode, "just get by," years before most people had ever heard the letters G, P, and T in sequence.

But AI definitely sped up my descent into brain-death, even as I was aware of the risks. Which made me really think through the conversations around AI that we all hear day in, day out.

The noise surrounding AI

There seem to be two camps of AI thought-peddlers: On the pro-AI side you have the hype fanatics going "Imagine how godly you will be armed with AI, and how behind you are and will be once everyone else learns it!"

On the other end, you have the catastrophic worriers claiming "AI will steal our knowledge and humanity, you'll forget how to think and do things!" (Exaggerating to highlight the endpoints of the spectrum, not the average perspective.)

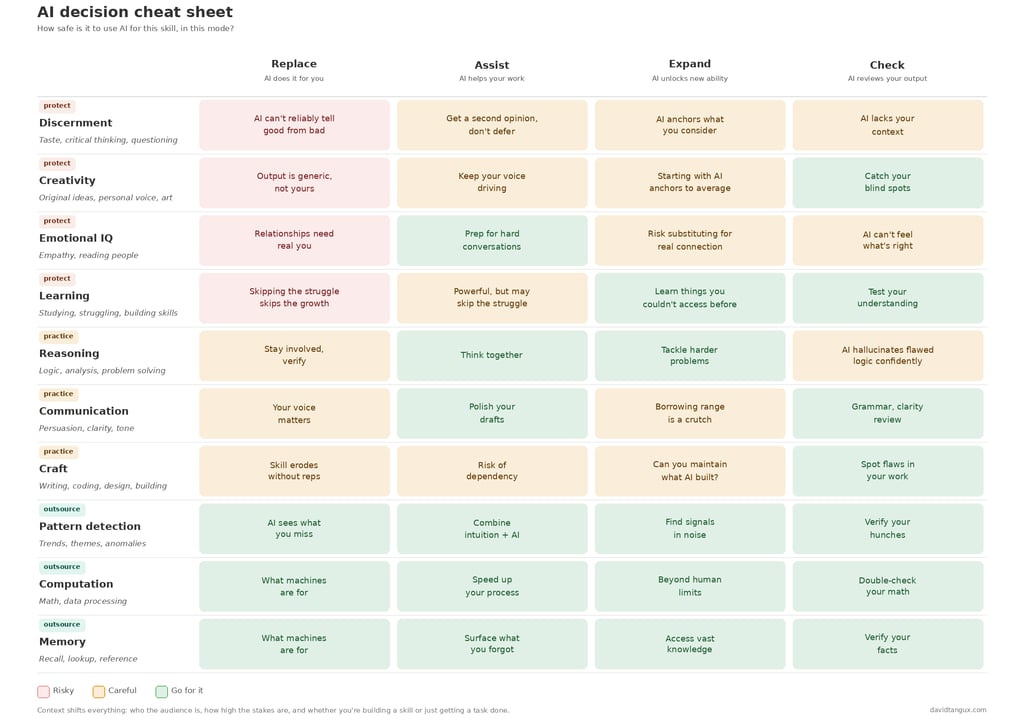

So if you're thinking about using ChatGPT to compose a work email, you could go to the chart, go down to communication, and see that it's probably fine to use it to help you plan, write, expand, or check your own writing. But to the extent that you want your own voice represented, you probably shouldn't let the AI do all the talking.

Answering the big question for yourself

As for the "so what" question, I highly suggest you start by removing AI and any other tools from the equation.

It's a solution finding a problem to solve.

I'm not deluded enough to preach a course of action that applies to everyone, but I do have some good questions for you to ponder: What gives you a sense of purpose, a feeling of joy, and the ability to create value for others?

These are not unique questions or concepts, but I see one or more of them noticeably absent in every conversation about AI.

Once you have some real answers for yourself, the tooling question becomes almost easy. For the things that check all the boxes, protect those parts of yourself even if you start incorporating AI and other tools in the mix. For the things that don't? You can probably worry less about them!

In the meantime, I'm going to stop making this article longer and figure out how the hell you do division with an abacus.

My reaction was “wow, you really get me!”

It’s not that I’m a Luddite. I used my TI-83+ all the way up until cell phones and computers made them redundant.

But I like gadgets. And old timey things. And objects with a high fiddle factor.

So obviously, this would be my new obsession, right?

Well, not for a year or so. It just sat there for a while as the chaos of life took over. You get it. Work, child care, the… world, I guess? Vague gesture at everything...

To the anti-AI folks: What are you protecting?

What specifically do you think is important enough that only humans should EVER touch it? Is it creativity? Critical thinking? Self-expression? If AI were to take everything but one thing, what would you want to save, and why?

Don't get me wrong, I get the fear of losing myself. The brain is like a muscle, and muscles deteriorate with even short stints of neglect. I had shoulder surgery 20 years ago and after being in a sling for 2 weeks, my arms to this day are not the same size.

What happens when we neglect parts of our brain in favor of automating or even enhancing with AI? As I write this article with my human fingers, I'm constantly tempted by the possibility of getting AI to help with the next paragraph, and feel the nagging fear that I'm not as good at writing as I was 6 months ago.

When I was fumbling around with that abacus, I wasn't accomplishing anything that would give me super clear value. We all have tools much faster and more accurate than I ever will be with an abacus. Hell, I can do mental math for simple enough problems faster than I can do them on this gadget.

And yet the process was doing things for me that no AI tool replicates out of the box: the feeling of learning a skill. The struggle that is absolutely necessary to accomplish it. And the feeling of success afterwards, which is unique to using your own brain to build a new capability, and very much diluted when something does it for you.

But here's where I think the anti-AI argument goes a little too far, at least when it's a blanket statement of "avoid AI." It basically says using a tool means you lose something about yourself. It sure can happen, but it depends on how and in what context you use it. If you work in IT and you use an auto-responder that answers basic questions with "Have you tried turning it off and on again?" I'd call that smart automation. But if you work in IT and AI is now responding to your mom's emails for you, you might have lost something along the way.

So where do we draw the line?

You may have noticed I didn't touch on the anti-AI fear about AI taking jobs. I already wrote about that here in 2023.

A NEW-ISH FRAMEWORK

I've seen a lot of "AI will never do this!" lists and "here's all the skills AI will take over" charts. Those all feel... incomplete. They're too simple, and don't cover the contextual nuance of daily life. Plus, they're all framed from the perspective of technical capability, and ignore the human side of what you're gaining and losing from using AI in those ways.

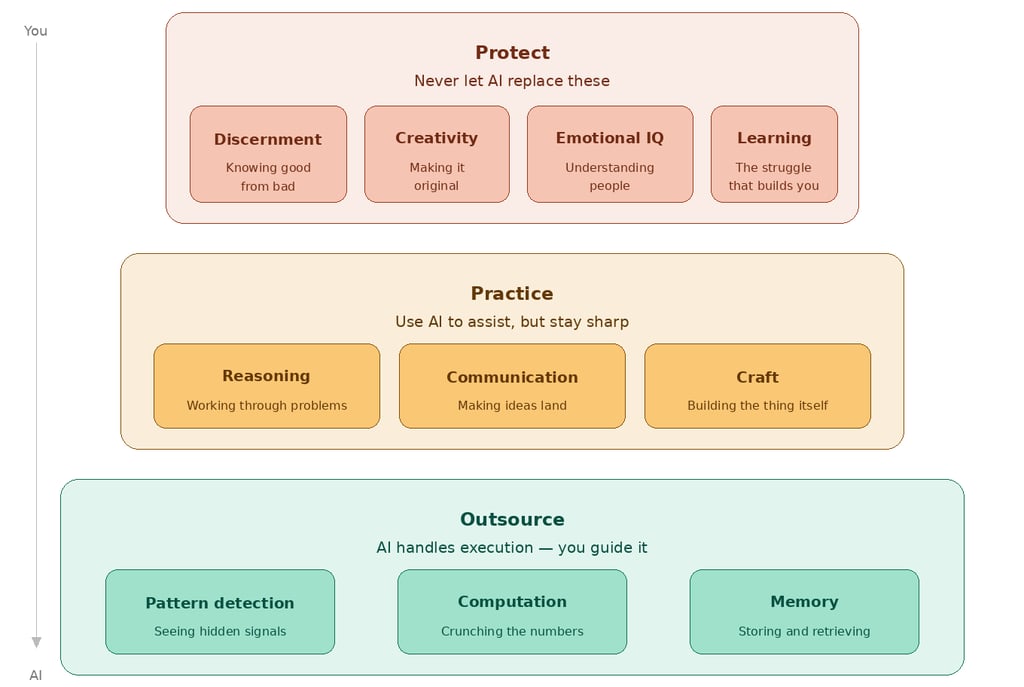

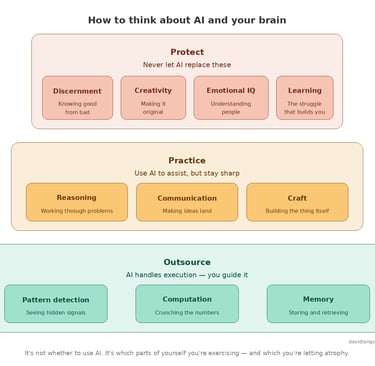

So I made a thing. In two parts: the first is a broad framework for how to think about human skills around AI, and the second is a sort of decision table to figure out the IF and HOW of using AI in a specific situation.

First, a hierarchy of broad human skills. Think of it as a breakdown of the things that you do with your brain, from the simple things machines can also do all the way up to the things unique to you (Inspired by Bloom's Taxonomy, for those interested).

I sorted all the brain stuff into 10 broad "skills," and grouped them into categories for how to use AI around those skills. Example at the bottom: memory. Humans suck at it, as a general rule. It's much more reliable to have technology be the storage and retrieval mechanism that you direct to get what you want. You still need your memory, but you can also create systems that will outperform yours.

Then let's look at the top of the list. What I'm calling discernment is your human judgment about what is a good idea. Almost by definition, AI doesn't do this, and we probably shouldn't try. When you set an AI loose to make decisions without you shaping it in some way, that's when it feels like we've given up, no?

You could use this framework on its own if you need a gut check. But it's still missing that contextual nuance of how you're using AI even within these skillsets (remember the AI mom emailer?).

So I made a cheatsheet. Using this framework as a starting point, it expands into different types of uses, from using AI to replace your skill (i.e., fully automate) to having it assist (example: preparation), unlock something new (example: brainstorming, remixing), or just check your own output (review, edit, etc.). These are color coded to give you a more nuanced take.